The relational and columnar design of kdb+, the world’s fastest time series database and real-time analytics engine, enables exceptionally fast analytics on large scale datasets in motion and at rest. This has made it the technology of choice for capital markets applications and industrial IoT applications involving large amounts of time-series data. This is due to the way data is optimally stored for manipulation and querying of time-series data and relational data in the programming platform. Although there are other columnar databases in the market, there are no databases that combine all of these aspects together.

This optimization enables kdb+ to deliver orders of magnitude better performance when working with sensor and other types of time-series data compared to alternative technologies. Recent performance benchmark results include:

- Ingesting, sorting and writing 100GB of ICE quote data, 1.2 billion records, in 25 minutes on AWS T2 x 2 – 32GiB RAM – EBS GP2 Storage

- Calculating 1-minute aggregated volume over 117GB in 15 seconds over single core 64GiB RAM on NVME Storage

- Average throughput 35,804 ticks per second (0.0224ms latency) on a single kdb+ CEP engine

The results indicate a hundred times better performance than rivals at around one-tenth of the cost, while simultaneously reducing cloud infrastructure and energy costs to around one-hundredth of existing spend.

Why is kdb+ so fast for data ingestion?

What makes kdb+ unique is that as an in-memory, time-series database it enables data to be ingested and made immediately available for queries. This makes it ideal for industrial IoT applications for ingesting, storing, processing, and analyzing time-series data – including IoT sensor data used in manufacturing and financial market data.

To achieve this level of performance, data is first placed in in-memory table(s) using a prescribed schema and protected through an on-disk log. By going to memory first, and making data available immediately for query, it enables kdb+ to support much higher ingestion rates of many millions of readings per second, hundreds of MBs / second, many terabytes per day on a single server than other technologies.

As memory is consumed, data is migrated from the in-memory database called the real-time database (RDB) to queryable temporary table(s) on disk called the IntradayDatabase (IDB). The IDB is partitioned by any configurable time interval, commonly 5, 10, 30, 60 minutes depending on the volume and available RAM. The data is then further organized, sorted, and migrated to more permanent storage on disk database tables that we call the Historical Database (HDB). The IDB and HDB can utilise various and tiered storage media such as solid state drives (SSD), hard disk drives (HDD), storage area networks (SAN), network attached storage (NAS), and parallel file systems, providing options to customers to optimize performance and cost of storing their data.

This ingestion process exploits both the performance advantage of sequential-write operations to disk, and making data immediately available from memory, thereby delivering orders-of-magnitude better performance than other technologies. Also, the structure of the database tables (columnar format) allows for bulk writes to tables on disk, which allows for more efficient ingestion of data.

With this approach, we are able to support large data volumes with less infrastructure, particularly where the daily volume exceeds RAM on a single server, while delivering exceptional query performance. The other added benefit is that organizations can avoid making copies of data for analysis when a single system can support both real-time and historical analytics applications.

Why is kdb+ so fast for queries?

The three primary reasons why kdb+ is so fast are:

- kdb+ is a vector-oriented database with a built-in programming and query language, q

- The entire kdb+ database and query language have a very small footprint (800 KB)

- kdb+ is optimized for data storage

Each of these three factors make kdb+ fast, but combined, they make it even more powerful. Although there are other time-series, columnar or vector databases on the market, there are no databases that combine all these aspects together. What are the specific advantages?

- The vector approach allows simultaneous operations on multiple data points at a time, so you reduce the number of operations required to achieve something. This eliminates the need for repeat operations on each piece of data, and greatly reduces overhead.

- With a built-in programming and query language, analytics are performed “in database” without the need to move data over a network or to another computation or analytics layer. kdb+ performs computations, aggregations, and filters in the database.

- The small footprint of kdb+ (800KB) allows the full scope of q operations to reside in the fastest area of the CPU (L1/2 cache), so operations exploit its speed inherently.

- Columnar representation of data is much more efficient for queries, as data retrievals are much more targeted to the elements of the data you need, as opposed to the full scope of the data. This greatly reduces the amount of scanning and retrieval of data that aren’t required.

- Storage of data on disk as memory mapped files, so that the database is not translating data from an on-disk representation to memory. This helps eliminate CPU operations required for translating on-disk objects to in-memory objects common with other technologies.

- Operations and joins on time-series and relational data, along with native support for time-series operations, vastly improves both the speed and performance of queries, aggregation, and the analysis of structured and temporal data. Some of the operations include moving window functions, fuzzy temporal joins, and temporal arithmetic.

- Multiple tiers of storage – RAM, SSD, HDD – to optimize performance and cost based on use case. For example, most important and most frequently accessed data can benefit from being placed in RAM and SSDs to deliver sub-millisecond response times.

Measuring the results with kdb+

As we have shown, kdb+ comes with a programming system optimized for high-performance manipulation and querying of time-series data and relational data. This optimization enables kdb+ to deliver order-of-magnitude better performance when working with sensor and related data compared to alternative technologies.

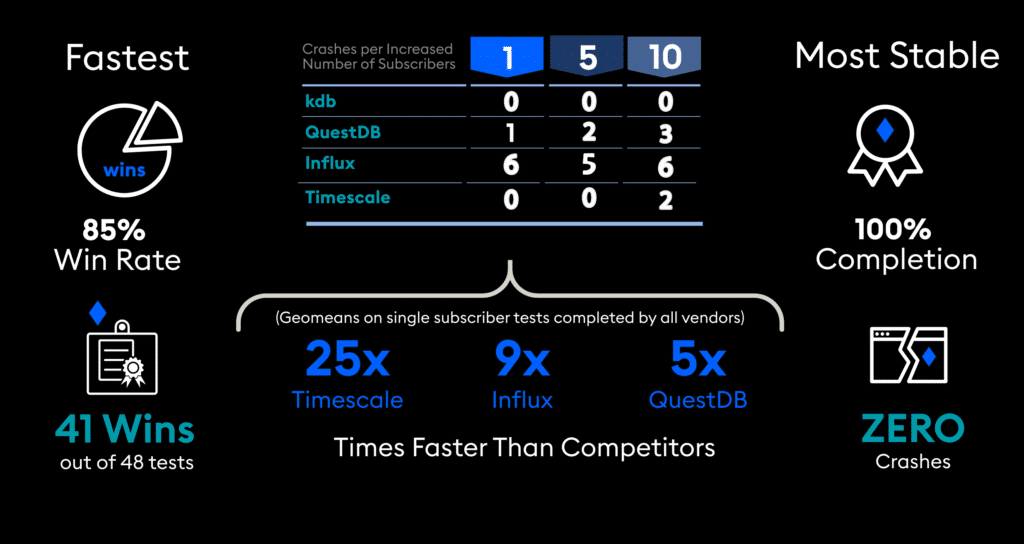

The Time Series Benchmark Suite (TSBS) is set of independently reproducible tests for comparing the performance of Time Series databases. The DevOps use case is used to generate, insert, and measure data from 9 ‘systems’ that can be monitored in a real-world scenario (e.g., CPU, memory, disk, etc). Sample results from the benchmark are illustrated below:

Further information on these results and other benchmarks are available here. For completely independent and audited performance benchmarks, the Security Technology Analysis Center Benchmark Council has a number of tests comparing low-latency, high-volume technologies; kdb+ features well in STAC’s results. You can visit STAC at https://stacresearch.com.

Conclusion

The velocity and volume of data continues to grow, along with the need for performing analyses ever faster, challenging traditional approaches and databases that were never designed to support these demands. For example, we are seeing data volumes and data rates increase by 10x to 100x across a wide range of industries. In manufacturing facilities, higher frequency sensors (100kHz to 1MHz) are capturing vastly more granular data. In the automobile industry, more sensors are being deployed (thousands to millions) throughout individual vehicles. Organizations like these are analyzing significantly more data faster, so that they can deliver better products and user experiences to their customers.

kdb+ is ideally suited for these demands because of its unique combination of a higher performance in-memory, columnar and relational database with an integrated vector-oriented programming system. Our customers are using kdb+ to get significant improvements to the performance and scalability of their applications in the face of these data volumes, particularly for supervisory control and data acquisition, data historians, fault detection and prediction, advanced data warehouses, and capital markets trading and surveillance systems.